Whenever SQL Server needs to sort a data stream, it will use the Sort operator to reorder the rows of the stream. Sorting data is an expensive operation because it entails loading part or all of the data into memory and shifting that data back and forth a couple of times. The only time SQL Server doesn’t sort the data is when it already knows the data to be ordered correctly, like when it has already passed a Sort operator or it’s reading from an appropriately sorted index.

But what happens if the data is ordered correctly, but SQL Server doesn’t know about it? Let’s find out.

Some demo data

Here are three tables with about 33.5 million rows each.

DROP TABLE IF EXISTS #work;

DROP TABLE IF EXISTS #work_reversed;

DROP TABLE IF EXISTS #work_randomized;

CREATE TABLE #work (

a int NOT NULL,

b int NOT NULL,

PRIMARY KEY CLUSTERED (a)

);

CREATE TABLE #work_reversed (

a int NOT NULL,

b int NOT NULL,

PRIMARY KEY CLUSTERED (a)

);

CREATE TABLE #work_randomized (

a int NOT NULL,

b int NOT NULL,

PRIMARY KEY CLUSTERED (a)

);

--- Load #work

INSERT INTO #work (a, b)

VALUES (1, 1000);

WHILE (@@ROWCOUNT<16000000)

INSERT INTO #work (a, b)

SELECT (SELECT MAX(a) FROM #work)+ROW_NUMBER() OVER (ORDER BY (SELECT NULL)),

(SELECT MAX(a) FROM #work)+1000+ROW_NUMBER() OVER (ORDER BY (SELECT NULL))

FROM #work;

--- Load #work_reversed

INSERT INTO #work_reversed (a, b)

SELECT a, -b AS b

FROM #work;

--- Load #work_randomized

INSERT INTO #work_randomized (a, b)

SELECT a, 33500000*RAND(a) AS b

FROM #work;

All three tables have the same schema and index: a unique clustered index on the “a” column, which contains an ascending integer key, and a “b” column that is not indexed. The “b” column is the difference:

- #work.b contains another ascending key, offset by 1000, i.e. 1001, 1002, 1003, 1004, …

- #work_reversed.b contains a descending key, starting with -1001, -1002, -1003, -1004, …

- #work_randomized.b is random.

How to test sort performance

I’m using this query with a window function that is ordered by “b”. This forces the query plan to use a Sort operator, because “b” is not the leading key of our index, so we can’t actually trust that it contains ordered data.

SET STATISTICS TIME ON;

SELECT *

FROM (

SELECT a,

LEAD(a, 1, 999999999) OVER (ORDER BY b) AS next_a

FROM #work

) AS sub

WHERE next_a-a=99

OPTION (MAXDOP 1);

I’m using the WHERE clause just to prevent the query from actually returning a lot of rows, which would mess up our timings. Also, because parallelism can come into play when you’re working with larger datasets, and parallelism typically breaks the sort order and introduces a lot of other performance noise, I’ve made sure that the query runs single-threaded.

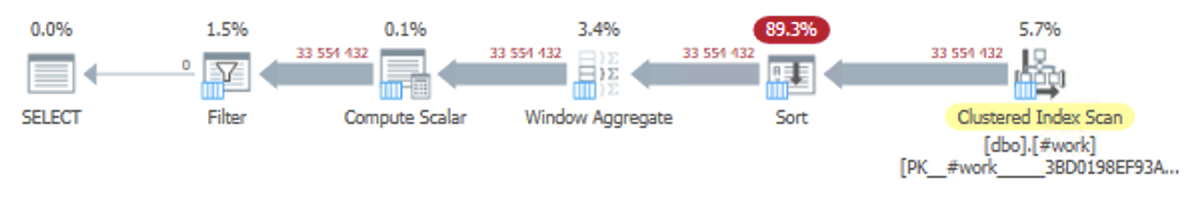

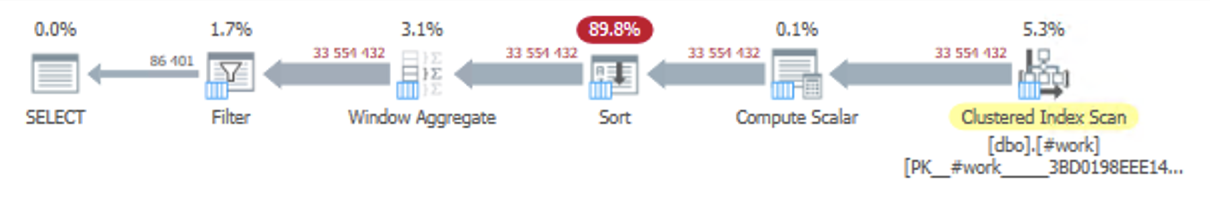

Here’s what the plan looks like for all of the test queries:

Performance

These measurements were all taken with a “warm” buffer pool, i.e. all of the tables were already in RAM, so disk speed is not a factor. Every query was run ten consecutive times to get a good average. CPU time and elapsed time never differed more than 15 ms. for each execution because there was essentially no I/O and no rows to return to SSMS.

Baseline: pre-sorted, indexed

As a baseline, the test query will run for about 2000 milliseconds when the ORDER BY is on the leading key of the clustered index, “a”, because there’s no need for a Sort operator at all. This is basically the cost of the Clustered Index Scan, the Window Aggregate, the Compute Scalar and the Filter operations.

Pre-sorted, not indexed

In the #work table, the contents of “b” appear to be ordered when loaded from the Clustered Index Scan operator, but SQL Server doesn’t know this of course. This query takes 5060-5083 milliseconds to complete.

Reverse-order sorted, not indexed

In the #work_reversed table, the contents of “b” are ordered, but in reverse order to “a”. I was not surprised to find that this query takes only a tiny amount longer to run than the previous one, 5106-5167 milliseconds.

Randomized

At this point, I had pretty much made up my mind that there isn’t a significant difference to sorted vs. unsorted data, so I was just expecting the randomized dataset to confirm my hypothesis. However, the randomized data consistently clocks in at around 7030-7059 milliseconds, which is significantly longer.

So what’s going on here?

If you consider that the actual Sort operation only runs for about 3000 milliseconds – the Scan, Window Aggregate, Scalar and Filter operations using 2000 milliseconds – the difference stands out even more: Sorting the random data takes 65% more time than the other datasets. What also surprised me was that sorting the descending values add just 3% to the sort time.

I would make an educated guess that SQL Server has a number of built-in sort algorithms to choose from, and that at least one of them is very well suited to data that appears almost perfectly sorted, even if it is in reverse order. The only way to straighten out 33 million random rows, however, is still with brute force.

Real-world application

Obviously, the above example is very abstract, and in a real-world scenario, there’s no guarantee that the Index Scan will be ordered if the plan doesn’t need it to be. But there are some real-world application: often, a data stream will already be sorted in the query plan, just not in the correct order. If another column roughly follows that sort order, sorting the other column may be less expensive than if it was completely random. Think of a function on an ordered column, for instance:

SELECT *

FROM (

SELECT dt, LEAD(dt, 1) OVER (ORDER BY dt) AS next_dt

FROM (

SELECT DATEADD(second, b, '2021-01-01') AS dt

FROM #work

) AS sub_sub

) AS sub

WHERE dt BETWEEN '2021-02-01' AND '2021-02-02'

ORDER BY dt

OPTION (MAXDOP 1);

Now, instead of ordering by the actual value of a column, we’re ordering by a function of the column. I specifically chose the DATEADD() function, because it “breaks” the sort order from SQL Server’s perspective, but in practice the sort order is preserved.

The outcome for the date function roughly mirrors the phenomenon what we saw with the integers:

- #work: 8.4 seconds

- #work_reversed: 8.0 seconds

- #work_randomized: 11.0 seconds

I KNEW IT.

1 comment